Google has acknowledged that speed is a determining factor in a site's ranking. For this reason, and to meet the needs of SEO experts, the Mountain View giant introduced a new tool called Page Speed Insight in Webmaster Tools (now Search Console). This tool lets you analyze the performance problems of a web page intuitively, directly from the Mozilla Firefox browser. Besides reporting potential problems, it also offers tips and useful functions for solving them.

Some of the flaws reported by Page Speed are easily fixed during the design phase of a site's frontend. Others, on the other hand, require more system-level knowledge.

Going into more detail, the factors that depend on the system configuration are:

- caching;

- compression;

Those relating to the frontend and the structuring of the site, on the other hand, are:

- reduction of DNS resolutions and redirects;

- minification of CSS / JS / HTML files;

- image optimization;

- order in which page elements are loaded;

- parallelization and reduction of HTTP requests.

Let's analyze these aspects in detail.

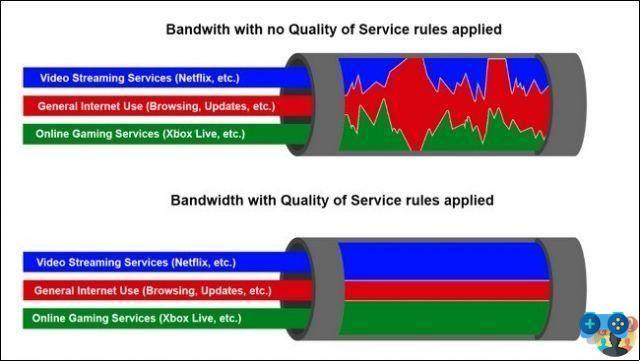

Phase one: Caching

The vast majority of web pages contain resources that rarely change, such as JavaScript files, CSS files, images that make up the layout, and other types of files. Even though these resources rarely change, they are downloaded again every time a user requests the page with a browser, which increases the page load time. HTTP caching allows these resources to be saved in the browser of the user visiting the site, or in the provider's proxy servers. As a result, a cached image, for example, is no longer served by the website but directly from the user's PC or from the proxy.

This brings a double advantage: it reduces the round-trip time (the time it takes for data to travel from a client to a server and back) by eliminating many of the HTTP requests needed to obtain all the resources and, consequently, it reduces the overall size of the server's responses. Reducing the total page weight for each returning visitor also significantly reduces bandwidth usage and hosting costs.
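As a rough sketch of the idea, the following Python function picks a `Cache-Control` header for a resource based on its file extension. The function name, the extension table and the lifetimes are assumptions made for illustration only; a real site would normally set these headers in the web server configuration.

```python
import os

# Hypothetical policy: cache lifetime in seconds per file extension.
# These values are illustrative assumptions, not recommendations from the article.
CACHE_LIFETIMES = {
    ".css": 7 * 24 * 3600,   # one week
    ".js": 7 * 24 * 3600,    # one week
    ".png": 30 * 24 * 3600,  # thirty days
    ".jpg": 30 * 24 * 3600,  # thirty days
}


def cache_headers(path):
    """Return the caching header to send along with the resource at `path`."""
    extension = os.path.splitext(path)[1].lower()
    max_age = CACHE_LIFETIMES.get(extension)
    if max_age is None:
        # Unknown or frequently changing resource: ask caches to revalidate.
        return {"Cache-Control": "no-cache"}
    # "public" lets shared caches (such as provider proxies) store the
    # resource too, not only the user's browser.
    return {"Cache-Control": f"public, max-age={max_age}"}
```

With this policy a stylesheet may be reused from the browser or a proxy for a week, while the HTML page itself is revalidated on every visit.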

Compression in progress...

Web pages can be compressed using gzip or deflate. All modern browsers support the compression of HTML, CSS and JavaScript files, which allows smaller files to be sent over the network without losing information. Compression takes place on the server side, either by enabling certain modules or by using specific scripts.

Compression is recommended for resources that are not too small, since the process places its own load on the machine. The ideal solution, therefore, is to combine it with caching mechanisms. Another point to keep in mind is to avoid compressing files in binary formats (such as images, videos, archives and PDFs), which are in practice already compressed.
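The two rules above (skip very small resources, skip formats that are already compressed) can be sketched in Python with the standard `gzip` module. The list of compressible types and the 1 KB threshold are assumptions chosen for the example, not values given by Google or by this article.

```python
import gzip

# Assumed set of text-based types worth compressing.
COMPRESSIBLE_TYPES = {"text/html", "text/css", "application/javascript"}

# Assumed threshold: below this size the CPU cost is unlikely to pay off.
MIN_SIZE_BYTES = 1024


def should_compress(content_type, size):
    """Apply both rules: text-based type and not too small."""
    return content_type in COMPRESSIBLE_TYPES and size >= MIN_SIZE_BYTES


def maybe_compress(body, content_type):
    """Return (payload, was_compressed) for a response body given as bytes."""
    if should_compress(content_type, len(body)):
        return gzip.compress(body), True
    return body, False
```

A server using this helper would also have to add a `Content-Encoding: gzip` header whenever the second value is true, and only do so for clients that advertise gzip support.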

DNS resolutions and redirects

Page download time is also significantly affected by the number of DNS resolutions and the number of redirects. Let's look at each in detail:

DNS resolution time is the time the browser takes to identify the origin of each hostname that serves resources, plus the delay caused by the round-trip time. To be clear, using many widgets within a page sharply increases the number of DNS resolutions. It is therefore best to use these objects deliberately, and only if they actually benefit visitors.

The same naturally applies to redirects: it is advisable to include resources that do not redirect to others. That said, in some cases, such as sites that include many videos and images, using different hostnames can be beneficial, because the DNS resolution time is offset by downloading multiple resources in parallel. The right trade-off therefore has to be assessed case by case.
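To get a feel for how many DNS resolutions a page triggers, one can count the distinct hostnames among its resource URLs. The sketch below uses Python's standard `urllib.parse`; the function name and the `example.com` URLs in the usage note are hypothetical.

```python
from urllib.parse import urlparse


def distinct_hostnames(resource_urls):
    """Return the set of hostnames referenced by a page's resources.

    Relative URLs have no hostname of their own (they are served by the
    page's host), so they are skipped.
    """
    hosts = set()
    for url in resource_urls:
        host = urlparse(url).hostname
        if host:
            hosts.add(host)
    return hosts
```

For a page that loads `https://www.example.com/style.css`, `//cdn.example.com/app.js` and `/img/logo.png`, the function reports two external hostnames, so each third-party widget that adds a new hostname is easy to spot.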

Parallelization

As recommended by Google, the optimal number of hosts is between 1 and 5 (one main host plus four from which to download the "cacheable" resources), and the ratio of hosts to resources should be 1 to 6. So never use multiple hostnames if the page uses fewer than six resources. For example, a site that uses one CSS file, one JavaScript file and six images would not benefit from an additional hostname, whereas one would be justifiable if the resources grew to 12.
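One possible reading of this rule of thumb, written as a small Python function: one host for every six resources, never fewer than one and never more than five. The function and constant names are assumptions for illustration, and the formula is only an interpretation of the figures quoted above.

```python
MAX_HOSTS = 5            # upper bound quoted in the text
RESOURCES_PER_HOST = 6   # ratio of 1 host to 6 resources quoted in the text


def suggested_host_count(resource_count):
    """Suggest how many hostnames to spread a page's resources over."""
    return max(1, min(MAX_HOSTS, resource_count // RESOURCES_PER_HOST))
```

With the example from the text, eight resources (one CSS file, one JavaScript file and six images) give one host, while twelve resources give two.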

Minification of CSS/HTML/JavaScript

Minification is simply the operation of compacting the source code of files by minimizing white space and newlines. Combined with compression, it achieves a significant reduction in file size. A small, trivial example of minification is this:

Before:

    a{
      color:#0027ee
    }

After:

    a{color:#0027ee}
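The transformation shown in this example can be approximated with a few regular expressions. The following Python function is a deliberately naive sketch: it does not understand CSS strings or other edge cases, so it is no substitute for a real minifier, and its name is an assumption.

```python
import re


def minify_css(css):
    """Naively compact CSS by removing comments and superfluous whitespace."""
    # Drop /* ... */ comments.
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)
    # Collapse every run of spaces, tabs and newlines into a single space.
    css = re.sub(r"\s+", " ", css)
    # Remove spaces around braces and semicolons.
    css = re.sub(r"\s*([{};])\s*", r"\1", css)
    # Remove spaces after colons and commas.
    css = re.sub(r"([:,])\s+", r"\1", css)
    return css.strip()
```

Applied to the three-line rule above, the function returns the one-line version `a{color:#0027ee}`.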

Image optimization

The key points of image optimization are undoubtedly maximizing the quality-to-size ratio and using the best available compression algorithms. A great tool for this is smush.com by Yahoo, but RIOT for JPG files and TinyPng for PNG files are also excellent.

Another very important practice when creating a web page that uses images is to always use pictures that have already been scaled to the size at which they are actually displayed. If we include a large 200 × 200 image on the page and resize it to 100 × 100 via the width and height attributes, we are using a larger image than we need and, consequently, it takes longer to download. It is also important to specify the exact size with those same attributes, which avoids unnecessary rendering operations.
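The cost of the 200 × 200 example can be made concrete with a little arithmetic. The Python helper below (a hypothetical name) compares the number of pixels downloaded with the number actually displayed; the real byte savings depend on the image format and content, so the ratio is only an indication.

```python
def pixel_overhead(source_size, displayed_size):
    """Return how many times more pixels are downloaded than displayed.

    Both arguments are (width, height) tuples in pixels.
    """
    source_width, source_height = source_size
    displayed_width, displayed_height = displayed_size
    return (source_width * source_height) / (displayed_width * displayed_height)
```

For a 200 × 200 source shown at 100 × 100, the browser downloads four times as many pixels as it displays.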

Order of loading and reducing HTTP requests

When designing a page, the basic rules to take into account are:

- combine multiple CSS files into one resource in order to reduce the number of HTTP requests (the same goes for JS);

- load the CSS first and then the JS, since the latter is blocking while the former can be downloaded in parallel;

- if possible, include JavaScript at the end of the page;

- avoid including inline CSS and JS within the page;

- avoid including CSS files using @import;

- serve static documents from domains that don't set cookies.

All these optimizations significantly reduce page loading times, because they minimize the number of HTTP requests, each of which takes a certain amount of time to complete, and they also allow the download of the various resources to be parallelized as much as possible.
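The first rule in the list, combining several stylesheets into one resource, can be sketched as a simple build step. The Python functions below work on the text of the files; their names are assumptions, and a real build would also read the files from disk and usually minify the result.

```python
def bundle_stylesheets(sources):
    """Concatenate stylesheet contents, in order, into a single resource.

    The order is preserved because later CSS rules can override earlier ones.
    Empty inputs are skipped.
    """
    return "\n".join(source.strip() for source in sources if source.strip())


def requests_saved(file_count):
    """Number of HTTP requests avoided by serving one bundle instead."""
    return max(0, file_count - 1)
```

Bundling three stylesheets this way replaces three HTTP requests with one, saving two round trips on every uncached page load.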