As a matter of fact, for a given screen size, viewing distance affects how much detail you perceive: the further you move from the screen, the less detail in the image you can make out. Always keep this in mind when choosing a new TV: what will my viewing distance be? The choice between FHD and 4K resolution matters less and less, as TVs with 1920 × 1080 resolution gradually disappear from the shelves of large shopping centers, but with the arrival of 8K TVs we will soon be asking ourselves the same question again: is it better to focus on resolution or on size?

Yet resolution and size aren't the only things that matter when it comes to viewing quality.

We do not reflect on this enough, but unlike the world of PCs, where you usually sit close to the screen, console gaming typically happens on the sofa, at a distance of at least two meters, since there is no need for a keyboard and mouse. At this distance, as shown in the table below (source: Panasonic), a screen of at least 55" is needed to see the difference in detail between a Full HD (1920 × 1080) and a 4K (3840 × 2160) image. If you move back to 2.5 m or more, a very common distance when playing in the living room, it takes as much as 65" to see a significant difference between a Full HD and an Ultra HD signal.
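The relationship between distance, size and resolution can be roughly checked with a bit of trigonometry. The sketch below is not the method behind the Panasonic table; it assumes the common rule of thumb that an eye with normal vision resolves details of about 1 arcminute, and the function names are purely illustrative. If a single Full HD pixel appears larger than that threshold, the extra pixels of a 4K panel start to become visible.

```python
import math

# Assumption: a viewer with normal vision resolves about 1 arcminute.
ACUITY_ARCMIN = 1.0


def pixel_arcmin(diagonal_in, horizontal_px, distance_m, aspect=(16, 9)):
    """Angle covered by one pixel, in arcminutes, at the given distance."""
    w, h = aspect
    # Screen width in meters, derived from the diagonal in inches.
    width_m = diagonal_in * 0.0254 * w / math.hypot(w, h)
    pitch_m = width_m / horizontal_px
    angle_rad = 2 * math.atan(pitch_m / (2 * distance_m))
    return math.degrees(angle_rad) * 60


def can_see_pixels(diagonal_in, horizontal_px, distance_m):
    """True if single pixels are larger than the assumed acuity threshold."""
    return pixel_arcmin(diagonal_in, horizontal_px, distance_m) > ACUITY_ARCMIN


# Full HD on a 55" screen from 2 m: pixels are just resolvable.
print(can_see_pixels(55, 1920, 2.0))
# Full HD on a 55" screen from 2.5 m: pixels blend together.
print(can_see_pixels(55, 1920, 2.5))
```

Under this assumption, Full HD pixels on a 55" screen are just resolvable at 2 m but not at 2.5 m, where a 65" screen is needed, which is in line with the figures quoted above.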

While distance plays a fundamental role in the perception of detail, the same cannot be said for the perception of color.

Our ability to recognize different shades of color has played an essential role in evolutionary terms.

Our eyes perceive radiation with wavelengths between 400 and 700 nanometers, which range chromatically from blue through green to red. The shades with wavelengths between 490 and 570 nanometers, which correspond to the different shades of green, are the ones our eye can distinguish with the greatest precision, even at a very great distance.

The origin of this phenomenon is evolutionary: thanks to the ability to distinguish colors, and green in particular, humans (and primates before them) had a better chance of survival, being able to spot a predator or prey among the foliage more easily.

This example shows how important the color rendering of an image is, and how easily it can be neglected when choosing a TV or monitor in the chase for ever-higher resolution numbers.

- If you are looking for the best gaming TVs, take a look here.

- If you are looking for a TV to use as a monitor, you can take a look at this guide.

- Our guide to G-Sync and FreeSync monitors, instead, is available at this address.

What is HDR?

High Dynamic Range (HDR) is an increasingly common abbreviation when buying a TV, but often-confusing marketing and the existence of different variants and abbreviations such as HDR10, HDR10+, Dolby Vision and HLG complicate buyers' lives.

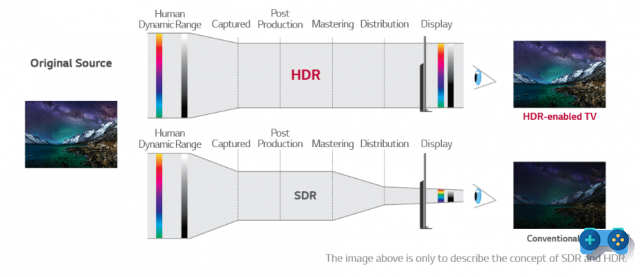

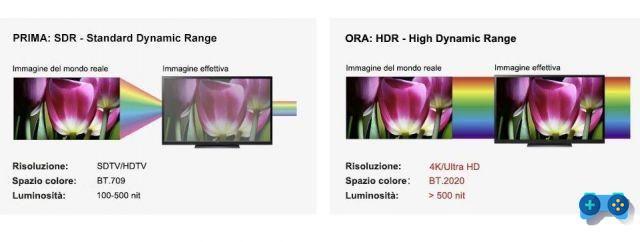

Until recently, TVs and monitors were based exclusively on what is known as SDR. A Standard Dynamic Range display, in essence, uses a conventional EOTF curve (electro-optical transfer function, the mathematical function that determines how much luminance to produce for each signal value), typically with 8 bits of color depth. In these cases the contrast ratio is limited to about 1,200 to 1. In SDR displays, the backlight simply provides uniform light, and the liquid crystal array alone is responsible for managing the color content. Since the backlight for the entire display is driven at a single level, often set by the brightest point in a given image, the darker content loses detail. In fact, to keep the bright part from appearing devoid of detail, the brightness is lowered uniformly and the darker part pays the consequences (and vice versa).

A High Dynamic Range (HDR) display, on the other hand, uses an EOTF curve that is extended at both ends of the light range and typically requires a minimum of 10 bits of color depth. With this scheme, it is quite easy to achieve contrast ratios well over 200,000 to 1.
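To make the idea of an EOTF more concrete, here is a minimal sketch comparing two curves. The article does not name the curves involved, so both choices are assumptions for illustration: a plain 2.4 gamma on a 100-nit display stands in for SDR, while the HDR curve is the PQ function defined in SMPTE ST 2084, which is the one used by HDR10 and Dolby Vision.

```python
def sdr_gamma_eotf(signal, peak_nits=100.0, gamma=2.4):
    """SDR stand-in: power-law curve (peak and gamma are assumed values)."""
    return peak_nits * signal ** gamma


def pq_eotf(signal):
    """PQ curve (SMPTE ST 2084): signal in 0..1 -> luminance in nits."""
    m1 = 2610 / 16384
    m2 = 2523 / 4096 * 128
    c1 = 3424 / 4096
    c2 = 2413 / 4096 * 32
    c3 = 2392 / 4096 * 32
    p = signal ** (1 / m2)
    return 10000.0 * (max(p - c1, 0.0) / (c2 - c3 * p)) ** (1 / m1)


# Compare how much light each curve asks for at the same signal level.
for level in (0.25, 0.5, 0.75, 1.0):
    print(level, round(sdr_gamma_eotf(level), 2), round(pq_eotf(level), 2))
```

The same signal range that tops out at 100 nits in the SDR stand-in stretches up to 10,000 nits with PQ, which is why HDR needs more bits to avoid visible steps between adjacent values.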

In addition, in HDR displays the backlight is often divided into smaller zones that are controlled individually (local dimming). Combining the brightness control of these zones with the pixel data of the LCD layer in front of them extends the contrast range.
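The principle of local dimming can be sketched in a few lines. This is a deliberately naive model, not how any specific TV works: each zone's backlight is simply set to the brightest pixel it contains, and the LCD layer then opens just enough to hit each pixel's target. Function names and the sample frame are hypothetical.

```python
def zone_backlight(frame, zone_h, zone_w):
    """Backlight level per zone: the brightest target pixel inside the zone."""
    rows, cols = len(frame), len(frame[0])
    zones = []
    for top in range(0, rows, zone_h):
        zone_row = []
        for left in range(0, cols, zone_w):
            block = [frame[r][c]
                     for r in range(top, min(top + zone_h, rows))
                     for c in range(left, min(left + zone_w, cols))]
            zone_row.append(max(block))
        zones.append(zone_row)
    return zones


def lcd_transmittance(frame, zones, zone_h, zone_w):
    """How far each LCD pixel opens so that backlight * opening = target."""
    result = []
    for r, row in enumerate(frame):
        out_row = []
        for c, target in enumerate(row):
            backlight = zones[r // zone_h][c // zone_w]
            out_row.append(target / backlight if backlight > 0 else 0.0)
        result.append(out_row)
    return result


# Hypothetical frame: bright on the left, nearly black on the right (0..1).
frame = [[1.0, 0.5, 0.02, 0.01],
         [0.8, 0.4, 0.00, 0.01]]
zones = zone_backlight(frame, 2, 2)
print(zones)
print(lcd_transmittance(frame, zones, 2, 2))
```

With a single global backlight the dark right half would be lit at full power and rely entirely on the LCD to block the light; with two zones its backlight drops to a fraction of that, which is where the deeper blacks come from.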

HDR screens are meant to display a much wider range of colors and contrast. The requirements a display must meet to be called HDR were established on August 27, 2015 by the Consumer Technology Association (CTA) in the United States.

Basically, TVs with HDR technology increase the contrast ratio and the color space in order to offer natural images that are closer to what the human eye perceives in the real world. To give an example, an image of a rock that is in shade on one side and lit by the sun on the other would be very difficult to handle on an SDR screen. With a larger color space and a higher level of contrast, it becomes possible to offer a faithful representation of both the shaded part and the illuminated part.

With the 10-bit panels currently on the market, HDR can reproduce 54% of the colors that exist in the real world; in the coming years 12-bit panels will take it further, reaching up to 76%.

Its arrival marked a very important turning point, comparable to the epochal transition to high definition (from 480i to 1080p).

HDR10 - constant values

All HDR TVs on the market support the HDR10 standard. The suffix 10 comes from the need for a 10-bit panel (or, in the worst case, 8-bit + metadata) to display this content. HDR10 is an open standard that requires no license fees, and is therefore supported by Blu-ray players, game consoles and streaming services. It allows for a maximum brightness of up to 4,000 nits (although current products are a long way from this value) and can reproduce over a billion shades of color. A great deal of content supports this format, which is applied by inserting static metadata.
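The "over a billion shades" figure follows directly from the bit depth. As a quick worked example (assuming three color channels, red, green and blue, each with the same number of bits):

```python
def shades(bits_per_channel, channels=3):
    """Number of distinct colors a panel can address at a given bit depth."""
    levels_per_channel = 2 ** bits_per_channel
    return levels_per_channel ** channels


for bits in (8, 10, 12):
    print(f"{bits}-bit: {2 ** bits} levels per channel, {shades(bits):,} colors")
```

An 8-bit panel addresses about 16.7 million colors, a 10-bit panel about 1.07 billion, and a 12-bit panel about 68.7 billion.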

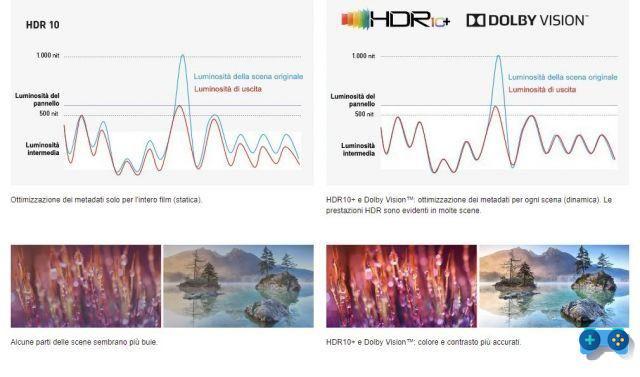

Metadata is information that accompanies the video and helps the TV adapt (tone mapping) the information in the video stream to the hardware characteristics of the display. In post-production, color grading is carried out against a standard (BT.2020) that provides a huge range of color shades, far wider than what even the most modern displays can currently reproduce. To readjust this content to the capabilities of each display (expressed in nits: 500 nits, 600 nits, 1,000 nits and so on), the TV remaps the colors or, if you prefer, compresses the dynamic range. Otherwise, everything that falls outside the TV's dynamic range would simply be lost. The metadata provides the instructions for readjusting the tones that the TV is unable to reproduce.
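To give a feel for what this remapping does, here is a minimal sketch of a tone-mapping curve. It is not the algorithm of any real TV (those are proprietary and use smoother curves); the knee position, the 4,000-nit mastering peak and the 600-nit display peak are assumed values chosen only for illustration.

```python
def hard_clip(nits, display_peak=600.0):
    """No tone mapping: everything above the display peak is simply lost."""
    return min(nits, display_peak)


def tone_map(nits, mastering_peak=4000.0, display_peak=600.0, knee=0.75):
    """Keep dark and mid tones as mastered, squeeze highlights above a knee."""
    knee_nits = knee * display_peak
    nits = min(nits, mastering_peak)
    if nits <= knee_nits:
        return nits  # below the knee the picture is left untouched
    # Position between the knee and the mastering peak, from 0 to 1.
    t = (nits - knee_nits) / (mastering_peak - knee_nits)
    # Spread that range over the headroom left between knee and display peak.
    return knee_nits + t * (display_peak - knee_nits)


for level in (100, 1000, 2000, 4000):
    print(level, hard_clip(level), round(tone_map(level), 1))
```

With hard clipping, highlights at 1,000 and 2,000 nits both end up at 600 nits and become indistinguishable; with the curve they stay different from each other, at the cost of being darker than the creator intended.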

With HDR10's static metadata, these instructions stay the same from the beginning to the end of the content.

This is a very important point: in practice, with HDR10 and its static metadata, the TV adjusts the signal for the whole movie according to the scene with the highest brightness. HDR10+ and Dolby Vision allow the TV to adjust the signal scene by scene (by sequence of frames) thanks to dynamic metadata. In this way, the screen matches the content more accurately to the characteristics of the panel in use and displays every scene with optimal saturation and brightness.
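The difference between the two approaches can be illustrated with a toy example. The sketch below uses the crudest possible tone mapping (linear scaling), hypothetical scene data and a 600-nit display, so it only shows the principle: static metadata gives the TV one peak value for the whole movie, dynamic metadata gives it one per scene.

```python
def compress(nits, source_peak, display_peak):
    """Simplest possible tone mapping: scale so source_peak hits display_peak."""
    if source_peak <= display_peak:
        return nits  # the display can show the content as mastered
    return nits * display_peak / source_peak


def static_mapping(scenes, display_peak):
    """One peak for the whole movie, taken from its brightest scene."""
    movie_peak = max(max(values) for values in scenes.values())
    return {name: [compress(n, movie_peak, display_peak) for n in values]
            for name, values in scenes.items()}


def dynamic_mapping(scenes, display_peak):
    """A separate peak for each scene."""
    return {name: [compress(n, max(values), display_peak) for n in values]
            for name, values in scenes.items()}


# Hypothetical luminance samples (in nits) from two scenes of the same movie.
scenes = {"night street": [0.5, 5, 120], "sunlit beach": [20, 800, 4000]}
print(static_mapping(scenes, 600.0)["night street"])
print(dynamic_mapping(scenes, 600.0)["night street"])
```

In this example the night scene is darkened along with everything else under static metadata, because the beach scene dictates the adjustment for the whole movie. With per-scene metadata it is shown as mastered, since it already fits within the panel's range.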

Dolby Vision

Dolby Vision was the first answer to the static metadata problem, that is, the need to adapt tone mapping to the different scenes in order to offer an even more realistic representation of the dynamic range. Dolby Vision supports dynamic metadata as well as 12-bit color depth and panels with brightness up to 10,000 nits. Most of these values are still theoretical, as there are no 12-bit panels and such high brightness will not be available (except with the arrival of MicroLED TVs). However, dynamic metadata as used today, when well implemented, already offers a further leap in quality.

This metadata contains scene-by-scene instructions that can be used by a compatible display to ensure that the content is reproduced as accurately as possible. Dolby Vision compatible TVs combine scene-by-scene information received from the source, adapting brightness, contrast and color rendition to the display specifications.

If you compare a Dolby Vision image with an HDR10 image, the former shows more detail in the bright areas and more balanced, nuanced and natural colors across the dynamic range; in 90% of content there is also better management of the contrast range and therefore a greater sense of detail.

Dolby Vision, however, is a proprietary technology: implementing it requires paying a license fee to Dolby, and for this reason it is not easy to find mid-range or low-end TVs with this technology. However, many content creators have embraced the Dolby philosophy and standard, among them film studios such as Lionsgate, Sony Pictures, Universal and Warner Bros., as well as Netflix (Stranger Things) and Amazon Prime Video (Jack Ryan).

HDR10+

Just like Dolby Vision, described above, HDR10+ is an evolution of the basic format, born in Samsung's laboratories to provide a cheaper alternative to Dolby Vision. The purpose of HDR10+ dynamic metadata is the same: varying the HDR rendering sequence by sequence, thus ensuring continuous optimization of brightness, contrast and color gamut.

Despite being developed by Samsung, the format is free to implement and requires no royalty payments. Thanks to the support of Amazon Prime Video, 20th Century Fox, Panasonic and Philips, it is gradually becoming more widespread.

HDR10+ vs. Dolby Vision

The visual rendering of these two formats is entirely comparable; it all depends (as always) on the implementation by content creators. Comparing the best sources available today in the two formats, neither is qualitatively better than the other.

The most important difference between the two formats is that HDR10+ has a 10-bit color depth, compared to Dolby Vision's 12-bit. There are no 12-bit panels today, but their arrival in the near future would put Dolby Vision on another level, as it can exploit a dynamic range that is simply not possible on 10-bit panels.

HLG: The HDR of TV broadcasts

HLG stands for Hybrid Log Gamma. In practice it is HDR applied to television broadcasts and live events, and it was created through joint research between the British BBC and the Japanese broadcaster NHK.

HLG manages to combine standard dynamic range (SDR) and high dynamic range (HDR) in a single video signal. This feature is essential for cable or satellite transmissions, as every user can reproduce the signal, in HDR if the TV supports it and in SDR on older TVs, leaving no one behind.
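The name "Hybrid Log Gamma" describes how this compatibility is achieved. The formula is not given in this article; the sketch below uses the HLG transfer function as published in ITU-R BT.2100. The lower part of the signal follows a square-root (gamma-like) curve that an SDR TV can display acceptably, while the upper part switches to a logarithmic curve that carries the HDR highlights.

```python
import math

# Constants of the HLG curve as defined in ITU-R BT.2100.
A = 0.17883277
B = 1 - 4 * A
C = 0.5 - A * math.log(4 * A)


def hlg_oetf(scene_light):
    """Map normalized scene light (0..1) to an HLG signal value (0..1)."""
    if scene_light <= 1 / 12:
        # Gamma-like section: compatible with SDR displays.
        return math.sqrt(3 * scene_light)
    # Logarithmic section: extra range for HDR highlights.
    return A * math.log(12 * scene_light - B) + C


for light in (0.0, 1 / 12, 0.5, 1.0):
    print(round(light, 4), round(hlg_oetf(light), 4))
```

The two sections meet at a signal value of 0.5, so the lower half of the signal range behaves like a conventional picture and the upper half is reserved for the highlights.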

Furthermore, this signal uses VP9 or HEVC compression, which limits the amount of data sent and makes it possible to use current digital terrestrial television (DTT) technologies.

Even though it is a hybrid format, not all HDR TVs recognize HLG. Since 2018, TVs have begun to support the new format en masse, but for earlier models everything depends on the manufacturer and its willingness to provide software updates for devices now considered obsolete.

The best HDR10+ and Dolby Vision TVs

Now that we have seen what these technologies are, let's see which TVs offer the best value for money while being able to exploit them.

Best budget Dolby Vision TV

Hisense ULED H9G

When it comes to the best value TV, very few other brands can offer the same visual quality as Hisense at a comparable price. The Hisense ULED H9G, presented at CES 2020 and available in our country from April (but very hard to find), is a QLED with local dimming across 180 zones and a peak brightness of 1000 cd/m². The Quantum Dot filter provides the wide color gamut (WCG) support required by HDR, in this case HDR10 and Dolby Vision.

The panel is based on VA technology and has a native 120 Hz refresh rate, while the audio section supports Dolby Atmos. Processing is handled by the Hi-View Engine AI processor. The operating system is Android TV with Google Assistant support. It is not the perfect TV for gaming because it does not support VRR (variable refresh rate), but it has excellent response times and very low input lag.

Best TV for color accuracy

Sony XH95

The Sony XH95 is one of Sony's flagship LED TVs from last year and, as such, has excellent out-of-the-box color accuracy, so you can enjoy HDR content right away, at decidedly more accessible prices than the current top of the range.

It has one of the widest color gamuts for a TV in this price range and exceptional coverage of the most commonly used DCI-P3 color space. It also has excellent peak brightness in HDR, making it perfect for playing HDR content. Its VA panel delivers deep blacks, and it has a full-array local dimming function. Again, it is not the best option for gamers: despite its 120 Hz refresh rate, it doesn't support any VRR technology, and its input lag is too high for competitive gaming. However, it has a great response time, so fast content isn't a problem. It has integrated Android TV. Choose it if you are a fanatic for color accuracy.

The best HDR10+ / Dolby Vision TV

LG OLED 65CX

When it comes to the best TVs in terms of visual quality, color accuracy and gaming functionality, just one name makes enthusiasts' eyes shine: the LG OLED CX. LG's CX series is an excellent TV from every point of view and, thanks to OLED technology, there is no blooming around bright objects.

It displays an extremely wide color gamut with near-perfect coverage of the DCI-P3 color space used in most content. It also has decent coverage of the larger Rec. 2020 color space and great color accuracy out of the box. It is a good choice for use in bright rooms, as it handles reflections exceptionally well. It is ideal for HDR gaming, thanks to very low input lag, an almost instant response time and support for VRR (variable refresh rate) technology to reduce screen tearing. The only disadvantages are the possibility of burn-in (very remote, however, in normal use for games and movies) and a brightness that is not as high as that of LED TVs. As we have seen, this can matter with HDR movies, which require high panel luminance. In any case, all the other qualities amply compensate for this small disadvantage (we are still talking about a peak brightness of around 850 nits).